Just finished reading AI Superpowers by Kai-Fu Lee. Lee has the credibility to speak on this topic: he holds a PhD from CMU in speech recognition, has run AI-related research, engineering, and businesses for Apple, Microsoft, and Google, and now runs a VC firm that funds AI startups.

The book is easy to read. It has two threads:

- China vs US development of AI.

  - The U.S., along with the UK’s DeepMind (a subsidiary of Google), continues to produce deep neural network (DNN) innovations and has deep technical expertise in DNNs and AI.

  - Lee forecasts that China might have the advantage, due to government funding and initiatives, aggressive, hard-working entrepreneurs, technical know-how, market-driven implementations, and an enormous amount of data from its large population and high internet and mobile adoption. One key point is the Chinese government’s push for the so-called Mass Entrepreneurship and Innovation program, which was recently upgraded.

- Dealing with the impending disruption from AI.

  - Narrow AI vs Artificial General Intelligence (AGI). All the extraordinary improvements in AI so far are narrow in scope, each solving one aspect of intelligence: image recognition, speech recognition, playing Go. So the threat of the Terminator remains in the movies.

  - However, narrow AI systems are very good, and could displace a lot of jobs (up to 50%) in the next 10+ years. AI would solve many problems and make a portion of the work humans do much more efficient. The consequence would be economic growth and improvements in quality of life, but not for everyone: today’s wealth disparity would be amplified by AI.

  - Lee’s proposal is that we recognize that our social relationships and emotions (e.g. love and compassion) cannot be replicated by AI. While humans use AI to perform tasks such as medical diagnostics, financial management, insurance adjustments, self-driving cars, …, humans add the compassion that enhances society. This would require some restructuring of the social contract, so that people’s lives and self-worth are no longer defined by their jobs.

I found much of the book very illuminating, until this last part. It’s an interesting conjecture. More specifics could be added on how this change could be implemented, or on how people would come to settle on this solution to the AI conundrum.

The forecast of AI disruption is likely to be real. However, the book does not provide many parameters for the disruption that would help predict its consequences and shape the right response. Lee writes about how lymphomas are classified into different types by just a few large features, primarily because humans can only deal with a few features at a time. To better determine the nature of his own lymphoma, he looked into many more small features, which led him to a better response and course of action. I would say the book’s forecast of AI disruption is analogous to the lymphoma situation: it does not have enough features to forecast future outcomes well enough to lead to an optimal response. The response suggested in the book is interesting, but not a readily implementable solution.

Nevertheless, the first 80% of the book offers great insight into current AI development, especially in regard to China vs US.

Below is a good interview of Kai-Fu Lee with Sebastian Thrun:

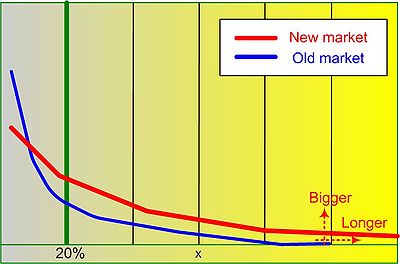
